The Agent Roundup / gpt

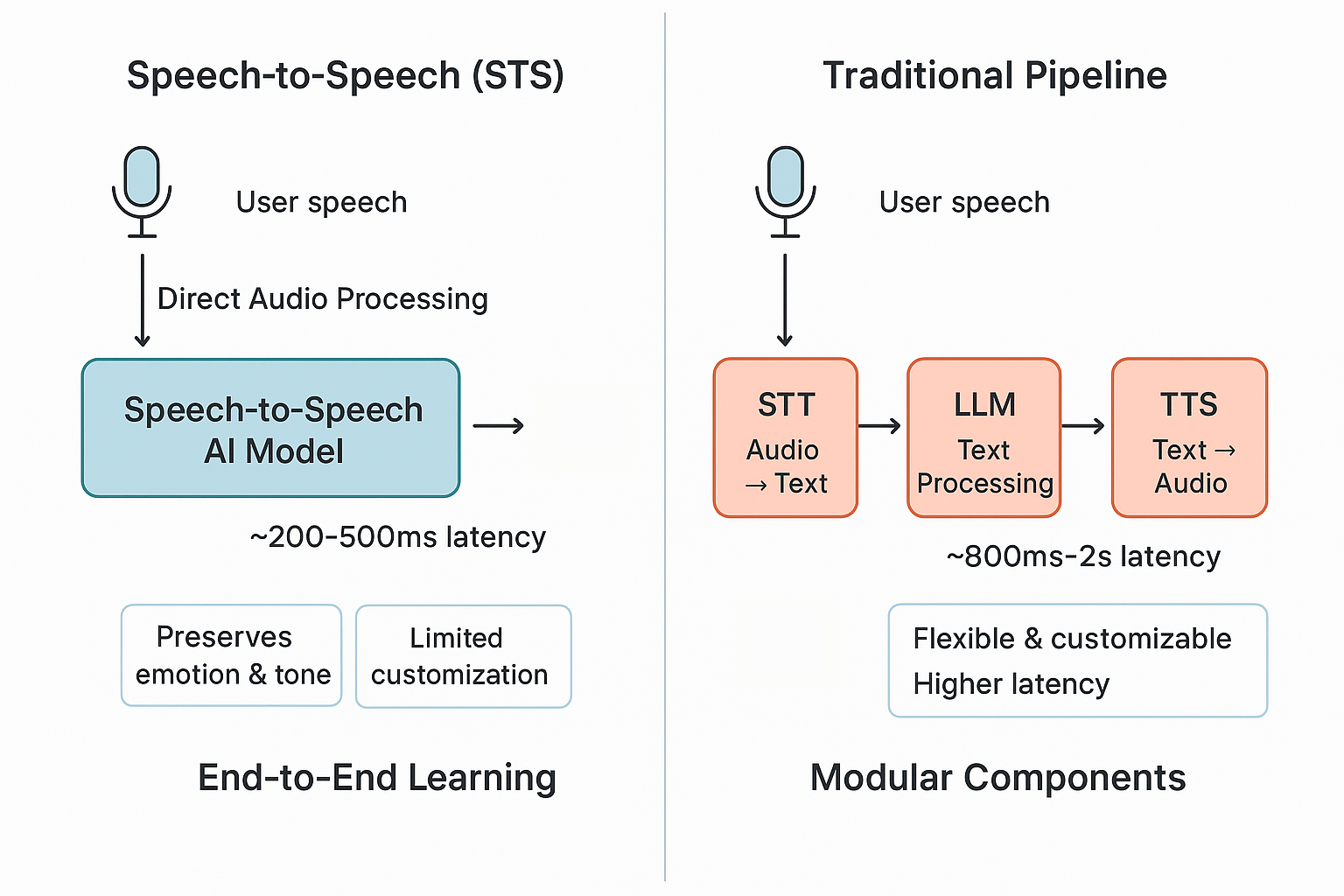

The “AI part” of voice agents usually consists of three components:

A speech-to-text (STT) model receives recorded audio from the user and converts it to text. This step is also called transcription.

A large language model (LLM) processes the transcribed text and generates a response text. For example, if the user asks “Where is my order?”, the LLM looks up the order information (tool use) and replies.

A text-to-speech (TTS) model converts the LLM response back to speech, and the audio is played to the user.
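The three steps above can be sketched as a chain of three functions. This is a minimal illustration, not a production design: `VoicePipeline`, `fake_stt`, `fake_llm`, `fake_tts`, `lookup_order`, and the `ORDERS` table are all hypothetical stand-ins, and a real system would call actual STT, LLM, and TTS services instead.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class VoicePipeline:
    """Chains the three components; each one can be replaced independently."""
    stt: Callable[[bytes], str]   # audio in, transcript out
    llm: Callable[[str], str]     # transcript in, reply text out
    tts: Callable[[str], bytes]   # reply text in, audio out

    def respond(self, audio: bytes) -> bytes:
        transcript = self.stt(audio)
        reply_text = self.llm(transcript)
        return self.tts(reply_text)


# Hypothetical order data standing in for a real backend.
ORDERS = {"A123": "shipped"}


def lookup_order(order_id: str) -> str:
    """Stand-in for a tool the LLM could call."""
    return ORDERS.get(order_id, "unknown")


def fake_stt(audio: bytes) -> str:
    # Assumption: the "audio" is just UTF-8 text so the sketch stays runnable.
    return audio.decode("utf-8")


def fake_llm(text: str) -> str:
    # A real LLM would decide on its own when to use the tool.
    if "order" in text.lower():
        return f"Your order A123 is {lookup_order('A123')}."
    return "Sorry, I can only help with orders."


def fake_tts(text: str) -> bytes:
    # Assumption: returns bytes in place of synthesized audio.
    return text.encode("utf-8")


pipeline = VoicePipeline(stt=fake_stt, llm=fake_llm, tts=fake_tts)
print(pipeline.respond(b"Where is my order?"))
```

Because each component is passed in separately, replacing one of them means changing a single argument.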

An alternative, architecturally simpler approach is a speech-to-speech model, which combines all three steps into one. The model receives audio and responds with audio directly, without a separate text step in between.

Should you stick with the proven STT→LLM→TTS pipeline or deploy a speech-to-speech (STS) model? Here are some nuances to consider:

Speech-to-Speech (STS) Models

Pros:

Superior conversational flow - Handles interruptions, overlapping speech, and non-verbal cues that a transcript does not capture (like a laugh)

Emotional intelligence - Preserves tone, inflection, and vocal cues for more empathetic customer interactions

Reduced latency - Direct audio processing is typically faster because it avoids the hand-offs between separate models

Multilingual voice consistency - Can switch languages mid-sentence while maintaining speaker tone and pacing

Cons:

Limited customization - At the moment, there are fewer voice options and minimal brand voice alignment compared to mature TTS libraries

Restricted language support - Currently supports far fewer languages and regional accents than established TTS systems

Vendor lock-in risk - End-to-end systems prevent swapping LLM components or TTS engines

Deployment constraints - Often cloud-dependent with limited on-premise options for data-sensitive industries

STT + LLM + TTS Pipeline

Pros:

Maximum flexibility - Swap one LLM for another (e.g., OpenAI models for Claude), change voice engines, or update STT models independently

Comprehensive language coverage - 100+ languages with extensive dialect and accent support

Enterprise-ready - Proven reliability with separate optimization of each component

Voice customization - Extensive libraries for brand-aligned voice selection and fine-tuning

Cons:

Higher latency - Multi-step processing creates noticeable delays in conversation flow

Lost emotional context - Text intermediary strips away vocal nuance, resulting in less expressive responses

Complex integration - Multiple vendors and APIs to manage, increasing technical debt

Conversational deficits - Struggles with natural speech irregularities like hesitations, and with background noise
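To see where the pipeline's latency comes from, it can help to time each stage separately. The sketch below assumes a simple, non-streaming pipeline in which the stages run one after another. `run_timed` and the placeholder `stt`, `llm`, and `tts` functions are hypothetical, and the `time.sleep` calls only stand in for model or network time.

```python
import time
from typing import Any, Callable

Stage = tuple[str, Callable[[Any], Any]]


def run_timed(stages: list[Stage], payload: Any) -> tuple[Any, dict[str, float]]:
    """Run the stages in order and record wall-clock seconds for each one."""
    timings: dict[str, float] = {}
    for name, stage in stages:
        start = time.perf_counter()
        payload = stage(payload)
        timings[name] = time.perf_counter() - start
    return payload, timings


# Placeholder stages; replace them with real STT, LLM, and TTS calls.
def stt(audio: bytes) -> str:
    time.sleep(0.01)  # stands in for transcription time
    return audio.decode("utf-8")


def llm(text: str) -> str:
    time.sleep(0.01)  # stands in for generation time
    return f"You said: {text}"


def tts(text: str) -> bytes:
    time.sleep(0.01)  # stands in for synthesis time
    return text.encode("utf-8")


reply, timings = run_timed([("stt", stt), ("llm", llm), ("tts", tts)], b"hello")
for name, seconds in timings.items():
    print(f"{name}: {seconds * 1000:.1f} ms")
print(f"total: {sum(timings.values()) * 1000:.1f} ms")
```

In this sequential setup the user waits for the sum of all three stages. Production systems may stream partial results between stages, which this sketch does not model.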

Conclusion

STS is great for consumer-facing applications that prioritize user experience. It also shines in use cases where emotional intelligence drives engagement and in real-time applications that require fast response times.

The pipeline approach is the better fit for deployments that require specific LLM capabilities, broad language and geographic coverage, extensive voice customization, or brand alignment. Because a range of open-source solutions is available, it is currently also the easier way to meet strict data sovereignty requirements.
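The trade-offs above can be written down as a rough checklist. The sketch below is only one way to encode them: the `Requirements` fields and the `suggest_architecture` function are hypothetical names, and treating every pipeline-favoring requirement as a hard constraint is an assumption, not a rule.

```python
from dataclasses import dataclass


@dataclass
class Requirements:
    # Factors that favor the STT + LLM + TTS pipeline
    needs_specific_llm: bool = False
    needs_broad_language_coverage: bool = False
    needs_custom_brand_voice: bool = False
    needs_data_sovereignty: bool = False
    # Factors that favor a speech-to-speech model
    prioritizes_low_latency: bool = False
    prioritizes_emotional_nuance: bool = False


def suggest_architecture(req: Requirements) -> str:
    """Return a starting point for discussion, not a final decision."""
    hard_constraints = [
        req.needs_specific_llm,
        req.needs_broad_language_coverage,
        req.needs_custom_brand_voice,
        req.needs_data_sovereignty,
    ]
    # Assumption: any hard constraint outweighs the experience benefits of STS.
    if any(hard_constraints):
        return "pipeline"
    if req.prioritizes_low_latency or req.prioritizes_emotional_nuance:
        return "speech-to-speech"
    # With no strong signal either way, default to the proven approach.
    return "pipeline"


print(suggest_architecture(Requirements(prioritizes_low_latency=True)))
print(suggest_architecture(Requirements(needs_data_sovereignty=True,
                                        prioritizes_emotional_nuance=True)))
```

A real decision would weigh these factors against each other instead of applying a strict order.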
